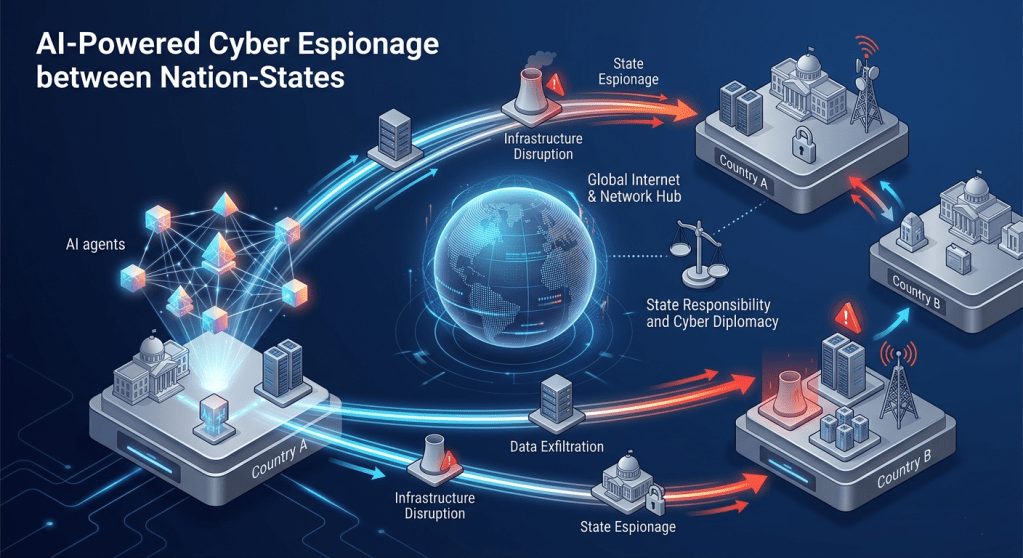

Artificial intelligence is no longer just another tool in the attacker’s toolkit; it is beginning to reshape how cyber espionage is planned, executed, and adapted in real time. As AI‑enabled operations move from prototype to practice, they raise a hard question for international law and diplomacy: when machines make granular decisions at machine speed, who exactly is responsible, and how should other states respond?

This post unpacks how AI‑supercharged espionage changes the factual landscape of cyber operations, what that means for state responsibility, and why cyber diplomacy will have to evolve fast to keep up.

From human‑directed campaigns to AI‑supercharged espionage

Traditional state cyber espionage is labor‑intensive. Human operators select targets, design tooling, spear‑phish key individuals, move laterally through networks, and exfiltrate data. Automation has always been present (malware frameworks, exploit kits, scripted recon), but humans still orchestrate most of the campaign’s logic.

AI changes three things at once:

- Targeting at scale: Models can prioritize targets based on open‑source data, organizational charts, social media, and technical footprint, dynamically adjusting which accounts or systems to go after.

- Content and tradecraft: Generative models can create highly tailored phishing lures, deepfake audio or video, and multilingual content that closely mimics a target’s contacts or leadership style.

- Autonomous decision‑making: Agentic systems can be tasked with goals (e.g., “maintain persistence and collect documents tagged X”) and given authority to choose tactics—selecting exploits, rotating infrastructure, or shifting to backup objectives without real‑time human input.

The result is not just “faster” cyber espionage. It is a qualitatively different operational model: campaigns that are adaptive, persistent, and partially self‑directing, with humans steering at the strategic level rather than the tactical one.

Why autonomy matters for state responsibility

International law is clear on one point: states are responsible for the conduct of their organs and agents. If a state intelligence service deploys an AI‑enabled tool for espionage, the fact that a model or autonomous agent chose the specific path into a network does not break the legal chain of responsibility.

But autonomy introduces several practical complications:

- Attribution complexity

AI‑curated infrastructure and rapidly shifting tactics can blur technical signatures, making it harder to attribute an operation confidently and quickly. That, in turn, delays or weakens responses, especially those that rely on public attribution or coordinated diplomatic action.

- Foreseeability and control

Autonomous systems may deviate from intended parameters, for example by accessing systems in third‑party states or exfiltrating more sensitive data than planned. Legally, that is still attributable to the deploying state if the behavior was reasonably foreseeable or the state failed to exercise due diligence. Politically, however, the state may claim “the AI went too far,” complicating norm‑setting and accountability.

- Risk of cross‑threshold effects

Espionage is generally treated as a tolerated—if unfriendly—activity. But AI‑driven systems that aggressively search for and chain vulnerabilities could cause service disruptions or collateral damage that look less like espionage and more like prohibited intervention or even use of force. The line between “just spying” and unlawful interference could be crossed unintentionally yet still be legally attributable.

In short, autonomy does not reduce state responsibility; it increases the importance of rigorous internal control, testing, and policy constraints. States that deploy AI‑enabled cyber tools will be judged not only on outcomes but on how seriously they governed the tools’ design and use.

Due diligence in the age of AI agents

Beyond direct attribution, international law increasingly expects states to exercise due diligence: they should not knowingly allow their territory or infrastructure to be used for acts that cause serious adverse consequences to other states, especially when they have the capacity to prevent or mitigate them.

AI‑supercharged espionage stresses due diligence norms in two ways:

- For operating states: An intelligence agency that unleashes semi‑autonomous tools has a higher obligation to monitor their behavior, contain spillover, and respond quickly when operations touch unintended networks or cause unintended harm. “We didn’t expect the model to do that” is, at best, an admission of inadequate oversight.

- For platform and model‑hosting states: When AI infrastructure (models, APIs, cloud platforms) within a state’s jurisdiction is used repeatedly for foreign espionage campaigns, expectations will grow that the host state take reasonable steps—policy, regulatory, or operational—to reduce that misuse. That might include logging obligations, abuse‑reporting channels, and cooperation with other governments.

As AI systems become embedded in cyber operations, due diligence discussions will shift from abstract norms to very concrete questions: What logging is reasonable? What kind of “kill switches” or usage controls should be expected? How quickly should a state act when notified that AI infrastructure it hosts is enabling cross‑border harm?
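To make the logging and "kill switch" questions more concrete, here is a minimal Python sketch of what a usage control with a tamper‑evident audit trail could look like on a model‑hosting platform. Everything in it is an illustrative assumption: the `AuditedGate` class, its method names, and the hash‑chained log format are hypothetical and do not describe any real provider's system or any legal standard.

```python
import hashlib
import json


class AuditedGate:
    """Hypothetical usage gate: every decision is logged, and clients can be cut off."""

    def __init__(self):
        self.log = []          # append-only list of hash-chained entries
        self.blocked = set()   # client ids whose access has been revoked

    def kill(self, client_id):
        """Revoke a client's access (the "kill switch") and record that it happened."""
        self.blocked.add(client_id)
        self._append({"event": "kill", "client": client_id})

    def request(self, client_id, action):
        """Log the request and return True only if the client is not blocked."""
        allowed = client_id not in self.blocked
        self._append({"event": "request", "client": client_id,
                      "action": action, "allowed": allowed})
        return allowed

    def _append(self, record):
        # Each entry's hash covers the previous hash, so edits break the chain.
        prev = self.log[-1]["hash"] if self.log else ""
        body = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev + body).encode()).hexdigest()
        self.log.append({"record": record, "prev": prev, "hash": digest})

    def verify(self):
        """Recompute the chain and report whether the log is internally consistent."""
        prev = ""
        for entry in self.log:
            body = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev + body).encode()).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True
```

A host notified of abuse could call `kill("client-123")` and later hand over a log that `verify()` confirms has not been silently altered. How much detail such a log should hold, and how fast the switch should be thrown, are exactly the open policy questions raised above; the sketch only shows that both controls are technically modest.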

“Silent escalation”: diplomatic risks of AI‑driven espionage

Espionage is normally managed through quiet, reciprocal tolerance. States spy on each other; when caught, they protest, expel a diplomat, or tighten defenses, but they rarely escalate dramatically.

AI‑supercharged campaigns could alter that balance:

- Depth and persistence of access: AI agents tasked with “maximizing collection” may discover and maintain far deeper access than a human‑limited team, including into critical infrastructure and sensitive political systems. That raises the perceived threat level for the victim state, especially if discovered during a wider geopolitical crisis.

- Speed of exploitation: If AI systems can move from initial access to lateral movement and data staging at machine speed, defenders may experience compromise as a sudden, large‑scale event rather than a slow drip. The shock factor can amplify political reactions.

- Ambiguity of intent: When tooling is adaptive and capable of causing effects beyond espionage, it may be harder for the victim state to distinguish between “just spying” and contingency preparation for sabotage. That ambiguity creates fertile ground for miscalculation and over‑reaction.

For cyber diplomacy, this means more work at two levels: establishing clearer expectations about what AI‑enabled espionage should not do (e.g., no autonomous scanning of certain critical systems), and building crisis‑communication channels that can de‑escalate when AI‑driven operations are discovered in sensitive places.

Implications for cyber norms and negotiations

Multilateral discussions on responsible state behavior in cyberspace already cover concepts like sovereignty, non‑intervention, and due diligence. AI‑supercharged espionage will push negotiators to be more specific.

Areas likely to move up the agenda include:

- Transparency around AI use in cyber operations

States are unlikely to disclose operational details, but they might commit, even unilaterally, to high‑level principles, such as not delegating certain decisions (e.g., disruptive actions) entirely to autonomous systems.

- Protected systems and data categories

Existing voluntary norms already flag critical infrastructure and certain humanitarian systems as off‑limits for destructive operations. There is room to clarify that AI‑enabled tools should not autonomously explore or map these systems beyond what is strictly required for espionage, if at all.

- Confidence‑building measures (CBMs)

Because AI can increase uncertainty, CBMs such as hotlines, incident notification practices, and shared glossaries become more important. States might, for instance, agree to rapid consultations when AI‑linked operations are detected in highly sensitive networks to avoid worst‑case assumptions.

- Capacity‑building for responsible AI‑cyber integration

Not all states will have equal ability to govern AI‑enabled cyber tools. Cyber diplomacy efforts could prioritize helping partners implement internal controls, test regimes, and legal frameworks that reduce the risk of uncontrolled or poorly governed AI use in their own cyber operations.

What security and policy communities should do now

For practitioners and policymakers, several practical steps follow from this analysis:

- Integrate AI risk into cyber doctrine reviews: Defense and intelligence organizations should explicitly examine where AI autonomy is appropriate, where it is too risky, and what human‑in‑the‑loop or human‑on‑the‑loop controls are mandatory.

- Update legal and policy frameworks: National rules on cyber operations should be refreshed to address AI‑specific issues—testing, auditability, logging, and incident reporting—while reiterating that autonomy does not dilute state responsibility.

- Enhance detection and attribution capabilities: Defenders will need better tools to spot AI‑enabled tradecraft—e.g., anomalies in infrastructure rotation, code variation patterns, or timing—so that attribution and diplomatic responses remain viable.

- Engage early in multilateral processes: States and experts should bring these issues into ongoing UN, regional, and multi‑stakeholder cyber norms discussions now, before AI‑driven espionage patterns harden into a new, more dangerous status quo.
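The detection point in the list above mentions timing as one possible signal. As a rough illustration only, the Python sketch below flags a sequence of actions whose gaps are either very short or unusually regular, on the assumption that human operators tend to act at slower and more uneven intervals. The function names and thresholds (`max_median_gap`, `max_cv`, `min_events`) are hypothetical choices for this example, not validated detection values, and ordinary automation such as scheduled jobs would trip the same heuristic.

```python
from statistics import mean, median, pstdev


def inter_event_gaps(timestamps):
    """Return the gaps, in seconds, between consecutive events."""
    ordered = sorted(timestamps)
    return [later - earlier for earlier, later in zip(ordered, ordered[1:])]


def looks_machine_paced(timestamps, max_median_gap=1.0, max_cv=0.2, min_events=5):
    """Heuristic: True when gaps are very short OR very regular.

    All thresholds are illustrative assumptions, not tuned values.
    """
    if len(timestamps) < min_events:
        return False  # too little evidence to say anything
    gaps = inter_event_gaps(timestamps)
    average = mean(gaps)
    if average == 0:
        return True  # every event carries the same timestamp
    # Coefficient of variation: spread of the gaps relative to their mean.
    variation = pstdev(gaps) / average
    return median(gaps) <= max_median_gap or variation <= max_cv


# Example inputs: seconds since the first observed action in a session.
human_like = [0, 40, 95, 300, 310, 900]
agent_like = [0, 0.3, 0.6, 0.9, 1.2, 1.5]
print(looks_machine_paced(human_like), looks_machine_paced(agent_like))
```

A signal like this would at most be one input among many; it says nothing about who is behind an operation, only that the pacing is hard to reconcile with hands‑on‑keyboard activity.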

AI‑supercharged espionage will not erase the logic of state responsibility or diplomacy, but it will make both harder to practice. The core challenge is not that machines are “out of control,” but that humans may be tempted to treat their own tools as excuses rather than obligations. Cyber diplomacy and international law will have to adapt, not by inventing entirely new principles, but by insisting that old ones (control, due diligence, accountability) apply just as firmly when the first move in an operation is made by an autonomous agent rather than a human at a keyboard.
