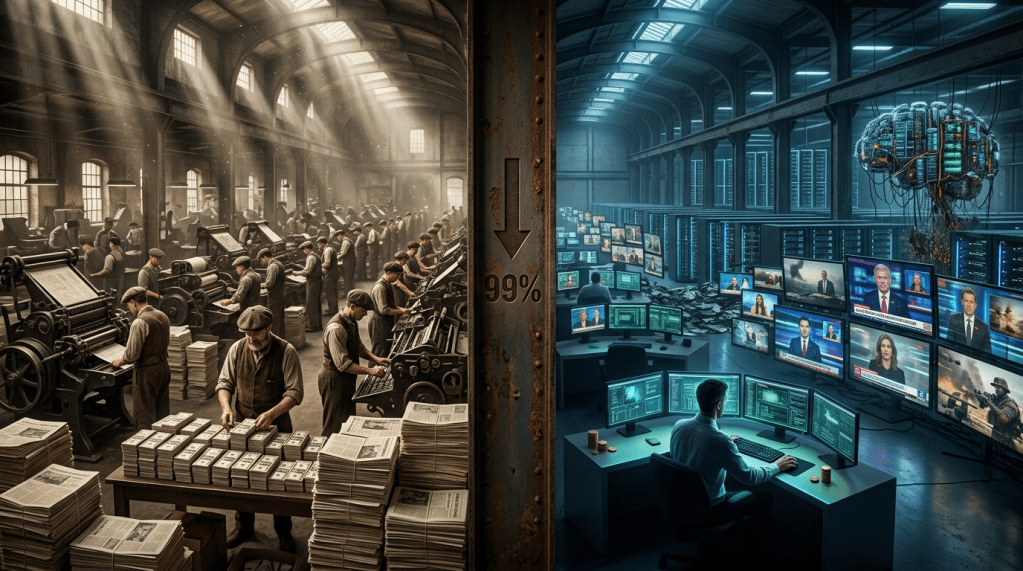

It used to cost money, time, and skilled operators to run a disinformation campaign at strategic scale. Generative AI has made convincing false content cheaper than truth. That is not a metaphor. It is the most significant structural change in the information warfare landscape since the invention of mass printing — and the institutions designed to counter it are calibrated for the world that preceded it.

By Vladimir Tsakanyan, PhD · Center for Cyber Diplomacy and International Security · cybercenter.space

In March 2026, a coordinated influence operation on TikTok deployed synthetic news anchors, deepfake-style celebrity endorsements, and networks of inauthentic accounts to amplify pro-Orbán narratives ahead of Hungary’s April elections. The operation ran parallel to the Russian “Matryoshka” campaign on X and Telegram. Neither required a significant budget by the standards of traditional influence operations. Neither required skilled human operators at anything approaching the scale that conventional disinformation campaigns demanded a decade ago. Both ran at a velocity and a geographic granularity, targeting Hungarian diaspora communities abroad as well as domestic audiences, that no pre-AI operation could have achieved without months of preparation and significant financial investment.

This is what generative AI has done to the economics of disinformation. It has not invented a new category of threat. Propaganda, fabricated imagery, and coordinated inauthentic behaviour are as old as political conflict. What it has done is collapse the three constraints that historically limited who could conduct information operations at strategic scale: the cost of content production, the time required to localise and adapt content to specific audiences, and the number of skilled human operators required to sustain a campaign at volume. All three have been reduced to fractions of their pre-AI levels. The strategic implications of that reduction have not yet been fully absorbed by the governments, the regulatory frameworks, or the platform companies attempting to address the problem.

The term that has emerged to describe the resulting phenomenon — slopaganda, a blend of “slop” (the low-quality AI-generated content flooding information environments) and “propaganda” — captures something analytically important beyond its neologistic appeal. It names the characteristic of the current disinformation environment that distinguishes it most sharply from its predecessors: not sophistication, but volume. The defining challenge is no longer identifying the skilled operator behind a carefully crafted operation. It is managing an environment in which the volume of synthetic content has overwhelmed the capacity of any fact-checking, content moderation, or counter-narrative system to address it comprehensively.

Before and After: The Economics of Lying at Scale

The economic comparison is instructive and under-examined. A sophisticated pre-AI influence operation — the kind documented in the Mueller Report on Russian interference in the 2016 US election — required: a team of skilled writers and social media operators fluent in the target culture; graphic designers capable of producing convincing visual content; translators for multilingual campaigns; account management infrastructure for networks of inauthentic identities; and sustained financial investment over months to build the audience relationships that made content amplification possible. The Internet Research Agency’s operation, assessed to have reached tens of millions of Americans, employed hundreds of people and cost millions of dollars over several years.

The 2026 equivalent does not require any of those things at equivalent scale. NewsGuard’s tracking found over 2,000 AI-generated news sites operating across sixteen languages by mid-2025, running almost entirely without human oversight. Leading AI chatbots were relaying false claims thirty-five percent of the time in August 2025 — up from eighteen percent the prior year — as their training data became progressively contaminated with AI-generated content. The number of deepfake videos available online was estimated at eight million by 2025, up from five hundred thousand in 2023. A single operator with access to commercial generative AI tools can now produce localised, culturally adapted disinformation content in dozens of languages at a pace and volume that would have required the full resources of a state intelligence agency a decade ago.
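The scale of these shifts is easier to see as ratios. The following is a minimal Python sketch that uses only the figures quoted above. It assumes the 2023 and 2025 deepfake estimates are comparable and two years apart; the variable and function names are illustrative.

```python
# Figures quoted in the paragraph above; names are illustrative only.
false_claim_rate_prior_year = 0.18   # share of chatbot responses relaying false claims
false_claim_rate_aug_2025 = 0.35     # same measure, August 2025
deepfakes_2023 = 500_000             # estimated deepfake videos online, 2023
deepfakes_2025 = 8_000_000           # estimated deepfake videos online, 2025


def growth_factor(old, new):
    """How many times larger the new value is than the old one."""
    return new / old


def annualised_growth(old, new, years):
    """Constant yearly growth rate that turns `old` into `new` over `years`."""
    return (new / old) ** (1 / years) - 1


rate_factor = growth_factor(false_claim_rate_prior_year, false_claim_rate_aug_2025)
deepfake_factor = growth_factor(deepfakes_2023, deepfakes_2025)
# Assumption: the two deepfake estimates are exactly two years apart.
deepfake_annual = annualised_growth(deepfakes_2023, deepfakes_2025, years=2)

print(f"False-claim rate grew {rate_factor:.2f}x in one year")
print(f"Deepfake estimate grew {deepfake_factor:.0f}x in two years")
print(f"Implied yearly growth: {deepfake_annual:.0%}")
```

On these assumptions, the false-claim rate roughly doubled in a year, and the deepfake estimate grew sixteenfold, which is equivalent to quadrupling each year.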

The implications for the threat landscape are structural, not incremental. When disinformation was expensive and labour-intensive, it was primarily a state capability — accessible to intelligence agencies with the resources to sustain large-scale operations. When disinformation becomes cheap and largely automated, it becomes accessible to non-state actors, criminal organisations, foreign influence operations operating on minimal budgets, and domestic political actors who previously lacked the resources to run professional influence campaigns. The democratisation of disinformation production is not a figure of speech. It is a measurable shift in who can conduct information warfare effectively, and against whom.

Analyst note

The poisoning of AI training data, as assessed by the Atlantic Council’s DFRLab, is the most consequential and least publicly discussed dimension of the current threat. The mechanism: disinformation campaigns deliberately target the training datasets used to produce the next generation of AI models, embedding false narratives and fabricated content into the corpus from which models learn. The two-year lag in AI training data means that content produced in 2024 and 2025 is only now beginning to manifest in model outputs, and the disinformation campaigns specifically designed to poison that corpus are beginning to produce measurable effects. Unlike surface-level disinformation, which can in principle be removed from platforms, training data contamination is structural: it produces models that generate false content not because they are instructed to lie, but because they have learned from a corpus that contains more falsehoods than their designers realise. It cannot be audited in deployed models with current technology. It cannot be remediated without retraining from clean data, an expensive, time-consuming process that the adversaries designing the poisoning campaigns are counting on defenders not completing before the next electoral cycle.

The Iran Conflict as Live Laboratory

The information environment surrounding the Iran conflict of 2026 provided the most extensive real-time demonstration yet of what AI-enabled slopaganda looks like at operational scale in a kinetic conflict. Easy and cheap access to AI video generation technologies allowed fabricated deepfake videos and photos of combat, civilian impacts, and statements by political and military leaders to flood social media within hours of the strikes beginning. Iran produced deepfakes of downed American fighter jets being paraded through Tehran. Pro-regime accounts deployed fabricated content through fake Western influencer personas designed to reach audiences who would be sceptical of overt Iranian state media. Russia amplified the most effective content through its established bot and disinformation networks. Chinese state media echoed anti-US narratives, including one false claim that Iran had shot down an American F-15 jet.

The competitive dynamic that emerged was not, as conventional analysis might suggest, primarily between truthful and false content. It was between competing false narratives — each side producing synthetic content designed to advance its preferred account of what was happening, while authentic journalism and official communications were simultaneously drowned in volume and contaminated by proximity. Euronews documented the pattern precisely: AI-generated content was being used by all sides and their supporters to win “hearts and minds,” with Iranian accounts using synthetic media to exaggerate military successes and US-aligned accounts producing content that Euronews analysts described as a “memeification of communication designed to appeal to a far-right aesthetic that rejects empathy in favour of humiliation.” The information environment of the conflict was not an adversarial contest between truth and falsehood. It was a multi-sided production contest in which speed, volume, and emotional resonance determined reach — and authenticity was neither a necessary condition nor a reliable advantage.

The strategic implications of this dynamic extend well beyond the Iran conflict. If the information environment of a kinetic conflict can be saturated with synthetic content at sufficient volume to prevent any actor — including the parties to the conflict — from confidently assessing how their actions are being received by the audiences that matter, then the traditional role of information in conflict management is undermined. Escalation management depends on decision-makers having access to reasonably accurate assessments of adversary intent, domestic political constraints, and third-party reactions. When the information environment of a conflict is deliberately and successfully polluted with slopaganda, those assessments become unreliable — and the risk of miscalculation, the risk that a decision-maker acts on a false picture of the political environment and produces consequences that no party actually wanted, increases in ways that no kinetic capability can prevent.


Why AI-Powered Detection Is Losing the Race

The conventional response to the AI disinformation threat has been to develop AI-powered detection tools — systems capable of identifying synthetic content, watermarking AI-generated media, and flagging suspected deepfakes for human review or automatic removal. These tools exist, are improving, and are genuinely useful in specific, constrained deployment contexts. They are losing the race against the production systems they are designed to detect, and the structural reason for this is not technical but economic.

Detection tools are developed by well-resourced technology companies and research institutions operating on deliberate, accountable development cycles. Production tools are developed by a global open-source ecosystem operating on competitive commercial incentives, with no constraint on the sophistication of the outputs they enable. When Google’s SynthID watermarking system tags AI-generated media, adversarial actors migrate to unmarked open-source models — a migration that takes hours, not months, and that resets the detection advantage to zero without requiring any corresponding investment in new production capability. The asymmetry is structural: every advance in detection provides a defined target for evasion; every advance in production expands the attack surface that detection must cover.

The human evaluator problem compounds the structural detection gap. A meta-analysis of fifty-six studies found that human evaluators performed little better than chance at detecting deepfake videos. A study published in 2026 found that viewers believed AI-generated content even when it was explicitly labelled as AI-generated. The EU AI Act’s Article 50 — requiring labelling of AI-generated content and synthetic interactions, enforceable from August 2026 — is grounded in the assumption that labels will change user behaviour. The empirical evidence suggests they will not, at least not at the scale and speed required to prevent synthetic content from shaping the information environment before corrections can be applied.

Analyst note

The Hungary case from April 2026 illustrated a specific tactical evolution that deserves attention: the use of “Reality Bombs” — coordinated bursts of hyper-realistic, entirely fabricated, highly localised news reports and social media posts targeting specific communities simultaneously. The tactic’s innovation is geographic and demographic specificity: rather than distributing a single false narrative widely, it produces dozens of locally adapted versions simultaneously, each calibrated to the specific political anxieties, cultural references, and information sources of a particular community. This approach is not detectable as a coordinated campaign by conventional monitoring systems designed to look for identical or near-identical content across platforms. Each localised version is sufficiently distinct to evade pattern-matching detection, while the underlying narrative agenda is consistent across the entire operation. It is disinformation at the granularity of individual communities, produced at the volume of a mass broadcast. No pre-AI operation could have sustained this approach at scale.
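Why localised variants slip past this kind of monitoring can be shown with a toy example. The sketch below implements one simple form of near-duplicate detection, Jaccard similarity over three-word shingles. It is an illustration under stated assumptions, not a description of what any platform actually runs: the function names, the 0.8 threshold, and all three sample texts are invented for this example and are not drawn from any real campaign.

```python
import re


def shingles(text, k=3):
    """Set of overlapping k-word sequences in the lowercased text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Share of shingles the two texts have in common (0.0 to 1.0)."""
    sa, sb = shingles(a), shingles(b)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical threshold above which two posts count as "the same" content.
NEAR_DUPLICATE_THRESHOLD = 0.8

# Invented sample texts: a seed post, a lightly edited copy, and a
# locally adapted version that pushes the same narrative in new words.
seed = "The city council voted last night to close the local hospital next month"
copy_paste = "BREAKING: The city council voted last night to close the local hospital next month"
localised = "Residents of the northern district learned today that their clinic will shut before winter"

for label, text in [("copy-paste", copy_paste), ("localised", localised)]:
    score = jaccard(seed, text)
    flagged = score >= NEAR_DUPLICATE_THRESHOLD
    print(f"{label}: similarity={score:.2f}, flagged={flagged}")
```

The lightly edited copy shares almost all of its word sequences with the seed and is flagged. The localised version carries the same underlying claim but shares none of them, so a detector of this kind treats it as unrelated content.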

What the Regulatory Response Addresses — and What It Does Not

The regulatory response to AI-generated disinformation in 2026 is more extensive than at any previous point, and more inadequate to the actual threat than its architects have been willing to publicly acknowledge. The EU AI Act’s labelling requirements, the UK’s consideration of mandatory AI content labelling under copyright reform, India’s February 2026 amendment requiring metadata tracing for AI-generated content, and South Korea’s AI Basic Act, which entered into force in January 2026, collectively represent a genuine shift in the regulatory ambition around AI-generated content. They share a structural limitation that none of them, individually or collectively, resolves.

Labelling and transparency requirements assume that the platform or producer of AI-generated content is identifiable, subject to the relevant jurisdiction, and motivated by commercial interests that create compliance incentives. State-sponsored disinformation operations meet none of these criteria. The Iranian actors producing deepfakes for the Iran conflict, the Russian Matryoshka operation running on X and Telegram, the Chinese state media amplifying fabricated claims about American fighter jets — none of them are subject to EU, UK, Indian, or South Korean AI content regulations. None of them have compliance incentives. None of them will apply watermarks or labels to their output because a regulatory framework in a foreign jurisdiction requires it.

The regulatory tools being developed are tools for governing legitimate commercial use of AI in information environments. They are not tools for governing adversarial state use of AI in information warfare. This is not a criticism of the regulatory effort — labelling requirements for commercial AI content are genuinely valuable for reducing accidental disinformation and for building the baseline of consumer awareness that supports media literacy. It is a reminder that the most consequential dimension of the slopaganda problem is the one that commercial AI governance frameworks are structurally unable to address: the deliberate, state-directed production of synthetic content for strategic information warfare purposes, conducted outside the reach of any regulatory framework that currently exists or is currently under development.

Bottom line assessment

Generative AI has restructured the economics of information warfare as fundamentally as the printing press restructured the economics of mass communication — by collapsing the cost of production to a level that makes the capability accessible to actors who previously lacked it, and by enabling a volume of output that overwhelms the systems designed to manage it. The slopaganda environment of 2026 — documented in the Hungary elections, the Iran conflict information space, the contamination of AI training data, and the proliferation of AI news sites operating without human oversight — is not a preview of a future threat. It is the operational reality of the present one. The regulatory frameworks being developed to address it are calibrated for commercial AI governance, not adversarial information warfare. The detection tools being deployed to counter it are losing the structural race against production systems operating without comparable constraints. The human evaluators being asked to identify synthetic content are performing little better than chance. The gap between the scale of the threat and the scale of the response is widening. Closing it requires confronting the structural economics that AI has created — not just the specific content it is producing.

This is Article 3 of the series “Disinformation & Information Warfare.” Previous: The Axis of Narrative — How Russia and China Built the World’s Most Dangerous Disinformation Alliance. Next: The Permanent Campaign — Elections, Epistemic Interference, and the Slow Death of the Democratic Information Environment. All articles available at cybercenter.space.

